{Podcast} Who Decides 2025: Global CEOs Reveal Where AI Can (and Can’t) Be Trusted to Decide

TL;DR

-

0% of senior leaders trust AI with hiring or strategy. Zero. The line is drawn.

-

Hybrid dominates: “AI suggests, human confirms” wins 3:1.

-

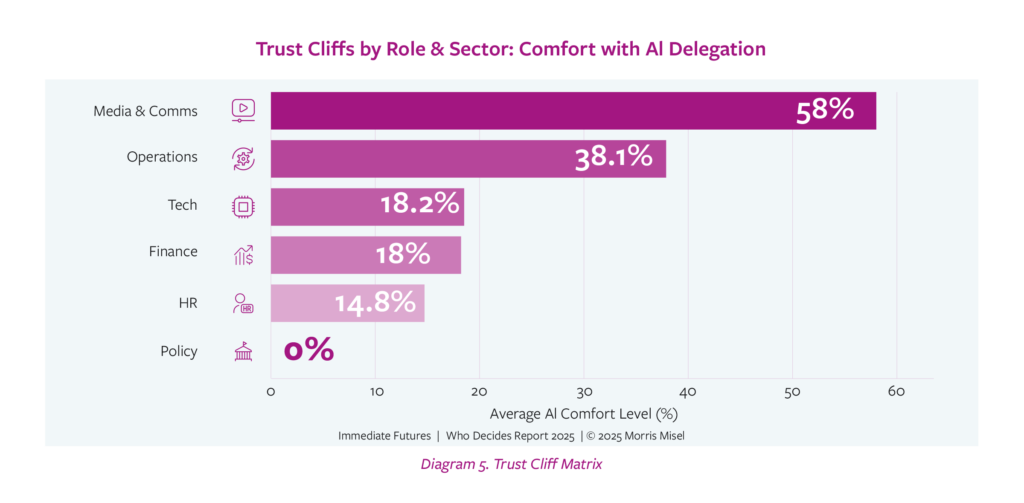

Comfort cliffs are real: Media execs 58% vs Government 0%.

-

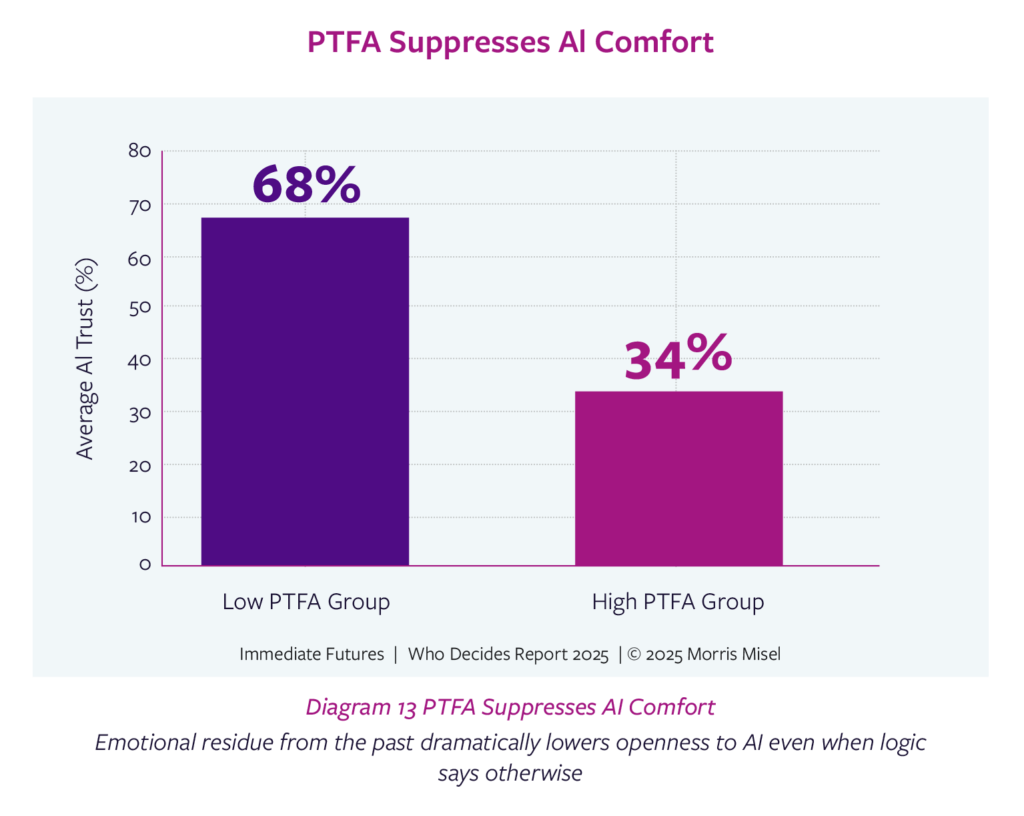

PTFA (Past Trauma / Future Anxiety): suppresses trust by 60%.

-

Built from conversations with 120 senior leaders across Australia, the United States, and beyond.

-

This is the first foresight benchmark of how far leaders will really let AI decide — and where they never will.

👉 Download the full report here

Will AI steer the future or will human hands stay on the wheel?

That’s the question on the cover of Who Decides 2025. Two hands gripping a steering wheel. Because at its core, this is about control.

And the answers? Brutal. Revealing. Startling.

- 0% of leaders trust AI with hiring or strategy.
- Only 15% would let AI plan a family holiday.
- 29% are fine with AI managing their superannuation or 401(k).
- Media executives are 58% comfortable. Government? Zero.

These aren’t theories. These are real leaders, real decisions, real lines in the sand.

I asked 120 senior leaders across Australia, the United States, and beyond, from finance and technology to healthcare, retail, government and more, where they would let AI decide for them, and where they would never hand over the wheel.

Here’s what they told me.

Look at the Numbers

- 0% of Government & Policy executives allowed AI to decide on capital spend.
- 62% of women in Operations were comfortable letting AI manage investments, the single highest subgroup score recorded.
- HR leaders were the most AI-sceptical: only 15% trusted AI on any hiring step.
- Gen Z execs were 1.7× more AI-positive than Boomers, but still shut the door when decisions touched identity or ethics.
- Hybrid dominates everywhere: four times as many leaders chose “AI suggests, I confirm” over “AI decides outright.”

This is the Comfort Cliff in action. The moment money, people, or reputation enter the frame, trust collapses.

Industry Shocks

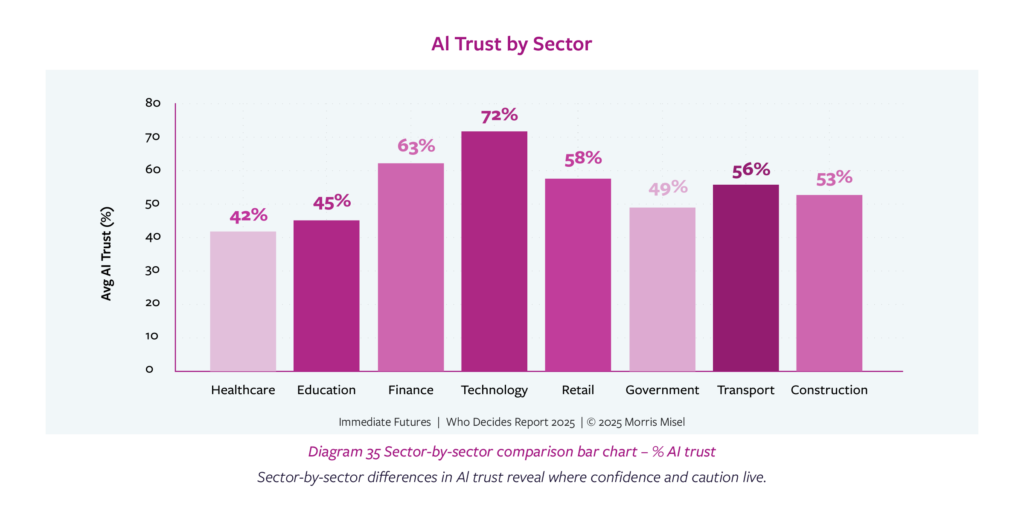

Different industries drew very different lines.

- Media & Communications: 58% average comfort. Algorithms already shape content, pricing, scheduling. Leaders here treat delegation as normal.
- Government & Policy: 0%. Not hesitation, a flat refusal. Regulation, risk, and reputation froze them.
- Finance & Insurance: More open on investments and pricing, but slammed the brakes on hiring and ethics.
- Healthcare & Biotech: Trust AI to crunch data, but never to make the final call.
- Tourism & Hospitality: 3% comfort. The strongest human veto of any sector.

This isn’t just data. It’s a cultural fingerprint of trust.

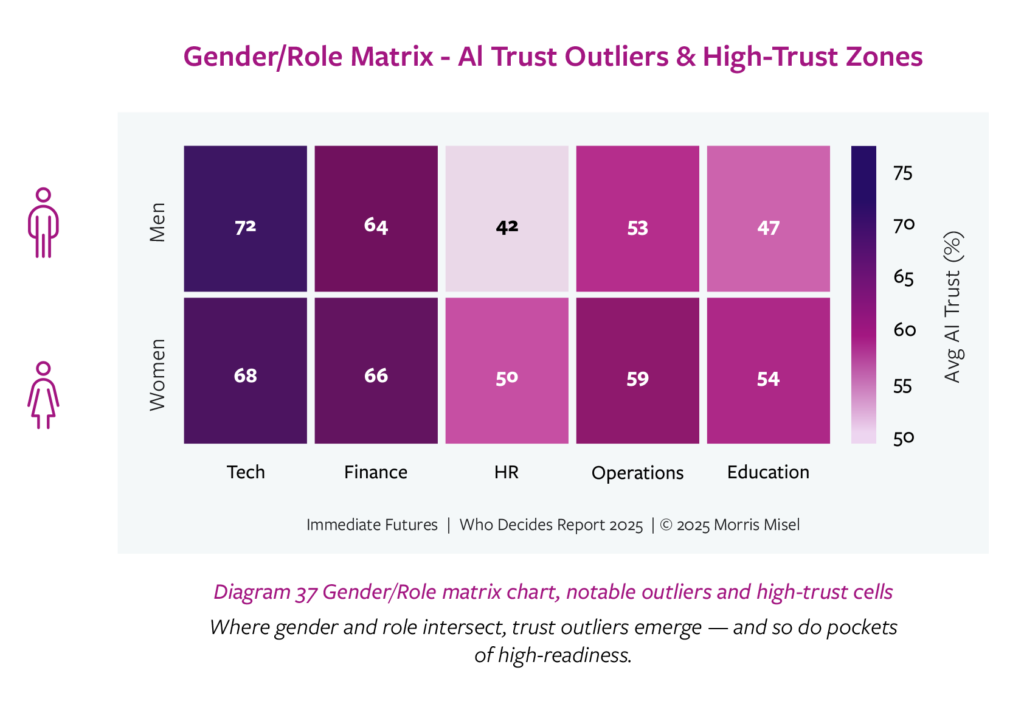

Gender and Generational Surprises

Forget the clichés. The data doesn’t match the stereotypes.

- Women overall were six percentage points more open to AI than men, especially in finance decisions.
- Gen Z executives were more positive until the decision touched ethics, identity, or reputation. Then they were as cautious as everyone else.
- Non-binary leaders recorded the lowest overall trust (8.3%) but showed willingness on specific, data-heavy tasks.

So no, this isn’t about age or tech-savviness. It’s about context and consequence.

PTFA: Past Trauma / Future Anxiety

I built the PTFA Index to capture the emotional drag that no algorithm can see.

Leaders with high PTFA scores showed 60% lower trust in AI.

- Past rollouts that failed left scars.
- Future anxiety about reputation or liability froze decision-making.
- The silent stall point? Middle managers. They scored highest PTFA, lowest trust.

This is why transformation fails. Not because the system fails, but because trust does.

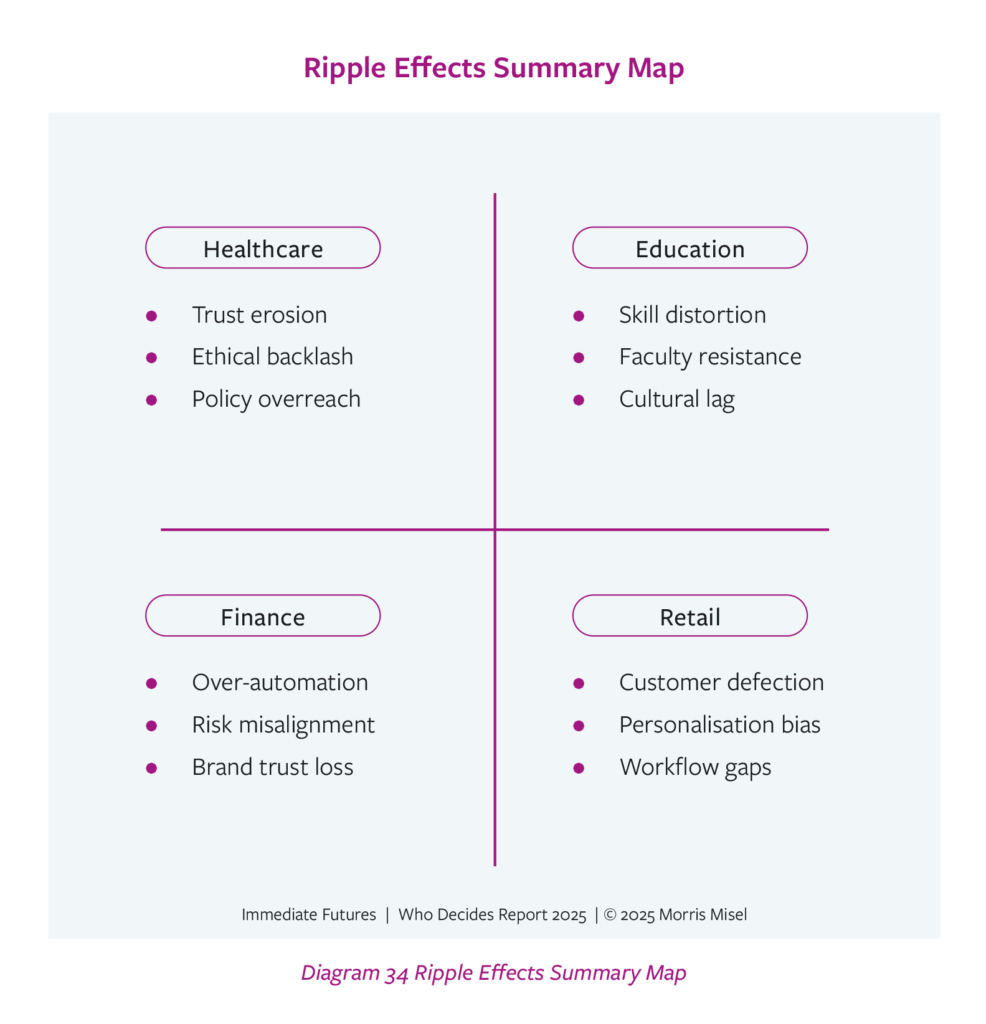

Ripple Effects

Every AI decision ripples.

Hand over a small decision, and it cascades:

- Into culture (who feels trusted).
- Into brand (who holds the voice).
- Into compliance (who carries liability).
- Into trust (inside and outside the organisation).

Every AI decision ripples beyond the task into culture, brand, compliance, and public trust.

Leaders who ignore the ripple effect pay the price.

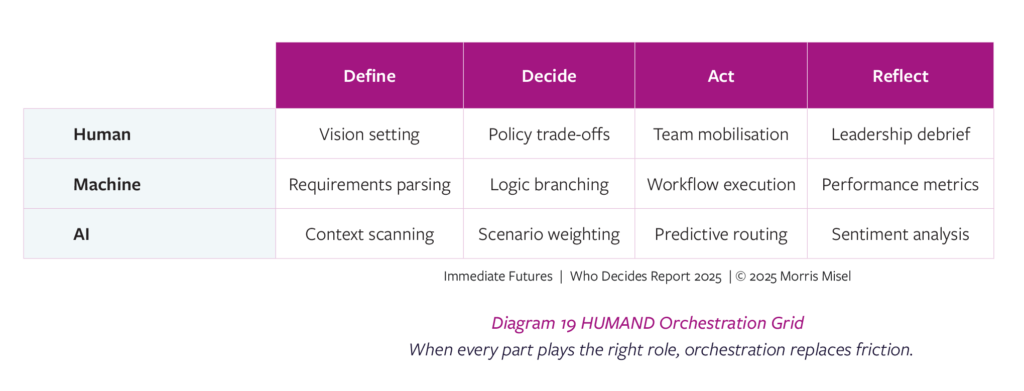

HUMAND: The Orchestration Model

So how do we deal with this?

I built HUMAND, a foresight framework to orchestrate Human, Machine, and AI roles.

- Humans: Frame intent, ethics, context.
- Machines: Execute repeatable processes.
- AI: Explore alternatives and probabilities.
- Navigation & Design: Align trust and clarity.

The sweet spot? AI crunches. Humans narrate and sign off.

How to Use This Report

I didn’t build Who Decides 2025 to sit on a shelf. I built it to be used.

Here’s how leaders are already working with it:

- Using PTFA to surface hidden resistance.
- Reassigning decision roles with HUMAND.
- Anticipating ripple effects before rollout derails.

Practical next steps:

- Print the Decision Trust Zones quadrant. Bring it to your next board meeting.
- Ask your team: where would you never hand over control?
- Pilot one low-risk shift, from human-only to hybrid, in the next 90 days.
- Build human override points into every AI workflow.
- Use PTFA to map resistance before it stalls change.
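For teams that want to see what a human override point can look like in practice, here is a minimal Python sketch of the “AI suggests, human confirms” pattern. It is an illustration only, not something taken from the report: the names `Suggestion`, `Decision`, `decide`, and `human_review`, the `high_stakes` flag, and the 0.9 threshold are all hypothetical choices made for this example.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Suggestion:
    action: str        # what the AI proposes to do
    confidence: float  # the AI's own confidence, from 0.0 to 1.0


@dataclass
class Decision:
    action: str      # what will actually be done
    decided_by: str  # "ai" or "human"


def decide(
    suggestion: Suggestion,
    high_stakes: bool,
    human_review: Callable[[Suggestion], str],
    auto_threshold: float = 0.9,  # assumed cut-off, tune to your own risk appetite
) -> Decision:
    """Hybrid gate: the AI suggests, a human confirms or overrides."""
    # Only low-stakes, high-confidence suggestions go through without review.
    if not high_stakes and suggestion.confidence >= auto_threshold:
        return Decision(suggestion.action, "ai")
    # Everything else passes through the override point: the reviewer
    # returns the final action, which may differ from the suggestion.
    return Decision(human_review(suggestion), "human")
```

A routine task such as `decide(Suggestion("reorder stock", 0.95), False, reviewer)` goes straight through, while anything flagged `high_stakes=True` (hiring, ethics, strategy) always lands with a person, however confident the AI is. Where you set the flag is the real leadership decision.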

Why I Built It

For more than 30 years, I’ve helped leaders navigate disruption across 160 industries and five continents.

I’ve always said: “The future isn’t about prediction. It’s about preparation.”

But in 2025, I saw a blind spot. AI wasn’t just supporting decisions.

It was already making them, quietly, invisibly.

And no one was asking: “Where do we let AI in, and where do we refuse?”

So I built Who Decides 2025.

The first foresight benchmark of its kind.

A pulse of real C-suite voices from Australia, the United States, and beyond.

It’s not commentary. It’s data. It’s foresight. And it’s designed for you to use.

Final Thought

Every decision nudges a direction.

Every handover carries a ripple.

And in the age of AI, the line between human and machine is being drawn, whether you notice it or not.

So the question is no longer “can AI decide?”

The question is: “Who decides, and when do we let go of the wheel?”

👉 Download the full report here

👉 Book me for a keynote, workshop, or executive session to start Choosing Forward, using this report and my foresight frameworks to shape your 2025 and 2026 strategy with clarity, confidence, and courage.

Because the leaders who act on this now will own the future.

Choose Forward.

For more on my Who Decides 2025 research report, listen to Hong Kong Radio 3’s Phil Whelan and me chat about it live on air in this week’s catch-up on all things future (17 minutes).

Frequently Asked Questions

What is the Who Decides 2025 report?

Who Decides 2025 is a foresight research report I commissioned and led, built from conversations with 120 senior leaders across Australia, the United States, and beyond. It is the first global benchmark of how far executives are willing to let AI make decisions — and where they draw the line.

What are Decision Trust Zones?

Decision Trust Zones are a foresight framework I created to map comfort levels with AI decision-making. They range from “No-Brainers” (routine tasks) to “Humanity Rules” (ethics, hiring, strategy). The zones reveal where leaders delegate to AI, where they co-pilot, and where they never let go of control.

How does PTFA affect AI adoption?

PTFA stands for Past Trauma / Future Anxiety. My research shows that leaders with high PTFA scores are 60% less likely to trust AI. Past change failures scar confidence, and fear of future risk stops adoption. PTFA is the silent emotional veto in every boardroom.

What is HUMAND?

HUMAND is my orchestration model for the future of decision-making. It balances Human, Machine, and AI roles: humans frame intent and ethics, machines execute repeatable processes, AI explores probabilities, and Navigation & Design ensures clarity. It is a practical way to assign trust and responsibility in an AI-shaped world.

Why is the Who Decides 2025 report important for leaders?

Because it provides not just commentary, but hard data and foresight frameworks to guide 2025 and 2026 strategy. It shows where leaders are comfortable with AI, where they refuse, and how to prepare for ripple effects. It’s a tool to help executives and boards Choose Forward with clarity and courage.

Morris Misel is one of the world’s most trusted futurists and foresight strategists.

For more than 30 years, he has guided leaders across 160 industries and five continents through disruption, not by predicting the future, but by preparing them to shape it.

Heard by millions each year in the media and on stage, Misel is the creator of foresight frameworks including HUMAND™, PTFA™, Decision Trust Zones™, and Ripple Effects. His work helps CEOs, boards, and executive teams build clarity, confidence, and courage in the face of accelerating change.